How to use Faceswap and Facepush in Telegram – Face Swapping AI in Stable Diffusion

Replace the face in any photo by swapping, or “push” a different face into a brand new render

You can create an unlimited number of AI images of your face for social media, or AI avatars for your friends, with the AI FaceSwap and AI Facepush features of PirateDiffusion. They’re great for keeping a consistent face across many pictures without complicated prompting or building a model. Anyone can do it. Here’s how:

Examples of AI face swap (your face + image)

/faceswap mybro

/faceswap niero

Example of a Facepush (your face + prompt)

FaceSwap and Facepush: What’s the difference?

As you can guess by the name, you can use our Telegram bot to swap the face from one photo into countless others. In our implementation, the face is stored as a “preset”: you assign it an easy-to-remember name and then target other photos with it. There are two ways:

- FaceSwap requires a finished photo, and the face is “swapped” into it. This is its own command, similar to /remix. Reply to a photo with /faceswap.

- FacePush is a parameter of /render. This means that you can prompt any realistic situation and “push” the face into that situation. It works similarly to a one-shot LoRA. Before using Facepush, you must have a ControlNet preset of a saved face, or a debug ID from a completed photo with a face.

Prepping

For best results, /facelift the image first. This creates an “AI version” that sharpens small images. If you prefer not to have the face retouched, add the /photo parameter like this:

/facelift /photo /size:768x768

You’re ready to faceswap and facepush by calling the Debug ID, but the ID numbers are of course hard to remember. Let’s give it a name.

Give it an easy name to remember

You can use the long debug ID string above as the name, or save it as an easier name to remember, like this:

/control /new:browneyedgirl

Now we’re ready.

How to faceswap

Step 1: Create a photograph, or paste a second photo to target

Step 2: Reply to the target photo with /faceswap

We saved “browneyedgirl” in ControlNet, so we can recall the face anytime from now on:

/faceswap browneyedgirl

To control the effect, use the /strength parameter from 0.0 to 1.0

/faceswap /strength:1 browneyedgirl
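If you prepare your replies with a script, the command text can be assembled programmatically. The following is a minimal sketch, not part of PirateDiffusion: `build_faceswap_command` is a hypothetical helper that only formats the text you would send as a reply to the target photo, and it clamps the strength to the 0.0 to 1.0 range described above.

```python
def build_faceswap_command(preset, strength=None):
    """Return the text of a /faceswap reply for a saved face preset.

    Hypothetical helper for illustration: it only formats a string and
    does not talk to Telegram or PirateDiffusion.
    """
    preset = preset.strip()
    if not preset:
        raise ValueError("preset name must not be empty")
    if strength is None:
        # No strength given: use the plain form, e.g. "/faceswap browneyedgirl".
        return f"/faceswap {preset}"
    # Keep the value inside the documented 0.0 to 1.0 range.
    clamped = max(0.0, min(1.0, float(strength)))
    # ":g" drops a trailing ".0", so 1.0 is written as "1".
    return f"/faceswap /strength:{clamped:g} {preset}"


print(build_faceswap_command("browneyedgirl"))
print(build_faceswap_command("browneyedgirl", 1))
print(build_faceswap_command("browneyedgirl", 0.5))
```

Trying a few strength values on the same target photo is an easy way to find where the swap looks most natural.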

There are more examples below with troubleshooting tips.

How to facepush

Facepush is a parameter of the render command. This command is amazing for creating fictional situations where you don’t have a target photo, putting the character in just about any situation.

You can use the same ControlNet preset name as faceswaps, like this:

/render /facepush:browneyedgirl a closeup portrait of a woman standing on a pier

/render /facepush:browneyedgirl Shrek’s wife in the forest
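Because Facepush reuses one preset across any number of prompts, it is easy to generate a batch of commands for the same face. The sketch below is illustrative only: `build_facepush_command` is a hypothetical helper that formats the message text, and the rule that a preset name contains no spaces is an assumption based on the `/facepush:name prompt` form shown above.

```python
def build_facepush_command(preset, prompt):
    """Return the text of a /render message that pushes a saved face.

    Hypothetical helper for illustration: it only formats a string.
    """
    preset = preset.strip()
    # Assumption: the preset name is one token, since whitespace
    # separates the parameter from the prompt that follows it.
    if not preset or any(ch.isspace() for ch in preset):
        raise ValueError("preset name must be a single word")
    # Collapse line breaks and repeated spaces in the prompt.
    prompt = " ".join(prompt.split())
    if not prompt:
        raise ValueError("prompt must not be empty")
    return f"/render /facepush:{preset} {prompt}"


prompts = [
    "a closeup portrait of a woman standing on a pier",
    "Shrek's wife in the forest",
]
for prompt in prompts:
    print(build_facepush_command("browneyedgirl", prompt))
```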

Troubleshooting

Why is my target photo sharp, but my faceswap came out blurry?

Hmmm… let’s take a critical look at your input photo. Can you see the pores on her face? Not really, no. So this will limit the quality of what Facepush and Faceswap can do. If you’re really shooting for realism, you’ll want to be able to see the skin pores very clearly.

But it’s good enough for a quick demonstration, let’s continue.

Consider this example:

Best Practices

- Use high quality photos with clear lighting

- Use photos with a minimum resolution of 768×768 to a max of 1400×1400.

- It’s better to use a bright, daytime input photo even when you are targeting night time or indoor renders. The AI doesn’t have trouble doing style transfer, but it will struggle if it can’t clearly read the input photos

- You can save an unlimited number of faces

- It works best when the angle and size of the face are similar

- When the input photo and target resolution are the same, the results are more crisp

- For higher quality results, create a lora instead
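The resolution guideline above can be checked before you upload a photo. This is a minimal sketch under one assumption: the 768×768 to 1400×1400 range is read as applying to each side of the image. `check_input_resolution` is a hypothetical helper, not a PirateDiffusion feature.

```python
MIN_SIDE = 768   # recommended minimum, from the best practices above
MAX_SIDE = 1400  # recommended maximum, from the best practices above


def check_input_resolution(width, height):
    """Classify a photo size against the recommended input range.

    Assumption: the range applies to each side of the image.
    Returns "too small", "too large", or "ok".
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    if min(width, height) < MIN_SIDE:
        return "too small"
    if max(width, height) > MAX_SIDE:
        return "too large"
    return "ok"


for size in [(512, 512), (768, 768), (1024, 1400), (2000, 1500)]:
    print(size, check_input_resolution(*size))
```

A photo reported as too small is a candidate for /facelift first, since that command increases the size of the photo.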

Limitations

- Facial hair matters. If you are going from no facial hair to a face that has facial hair, it may erase part of the beard. You can add it back with Inpaint.

- Facepush does not work with SDXL /remix or /more (yet)

- Facepush requires a realistic render prompt

- Add a model for better results. In the example above, a model was added to support realism

- The faces that you create in @piratediffusion_bot cannot be seen by anyone else

- You cannot use those faces in public groups, but you can make (separate) shared ControlNet presets in a group

- If the input photo is poorly lit or low quality, you will get fuzzy edges

- It doesn’t work as well on anime or illustrations

- When the faces are already similar, the effect is more subtle. But it can be intensified or reduced using the strength parameter

- It will swap EVERY face in the picture

- Example of a realistic Facepush prompt: /render /facepush:myfavoriteguy2 a realistic photo of ____

Error Messages

- If you’re getting a “face not found” image, try doing a /facelift command on it. This will repair found faces and increase the size of the photo, two things that will help the next time you try /faceswap

- Try cropping the edges of the image so the face is more zoomed in and there are fewer pixels to work with

- Try adjusting the brightness and sharpness of the image

- Try a combination of these things, with another /facelift after fixing the light and clarity

- Worst case, try a photo with a different angle

More Examples

Here’s PirateDiffusion’s lead developer with a pearl earring:

You can invite your friends into a Telegram group chat, program all of your faces, and roast each other:

One limitation of /faceswap is that it will target ALL of the faces, but you can use /inpaint to correct this

Will Smith Chungus Blooper:

/render /facepush:myfavoriteguy2 a man is hugging a giant chungus

Unlimited AI music generator is here + new /makesong Telegram command

Generate unlimited ai music with Graydient Pro and Plus, make AI music without tokens or credits, create AI music without restrictions today! There are various AI music models in our system, such as Ace Step XL and Heart Mula. WebUI: … Read More

Edit videos and photos in your browser with Graydient Studio

Graydient Studio is here. Graydient lets you generate video, images, and music. Now you can put them all together too, right in your browser. Security camera footage from inside a cramped bodega, grainy CCTV quality with timestamp overlay in the … Read More

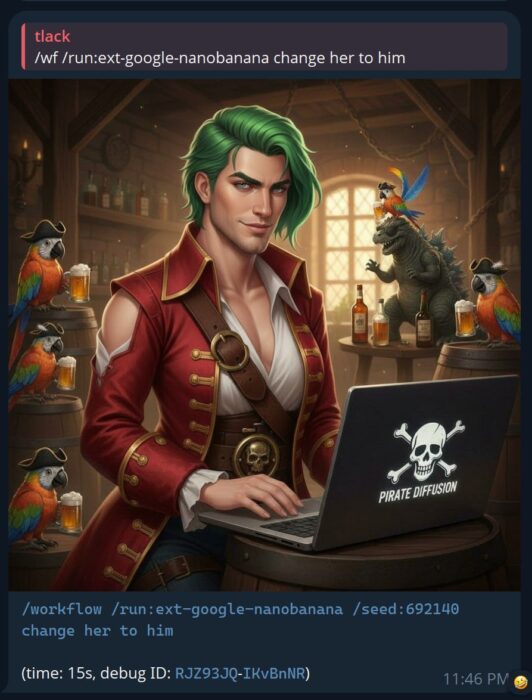

For your convenience, you can now bring your own External API keys for FAL and Google

Important Note: This is an external paid service, not related to Graydient’s Unlimited Plans. You should first have a membership with FAL and GOOGLE to use this. Graydient now supports external workflows — including Kling 3 video and Google’s Nano … Read More

New feature: Install and use LoRA models privately

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai You can now install the models you … Read More

Unlimited AI video generator is here – no tokens! Wan 2.2, SkyReels, HunYuan, LTX and more

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai We’re proud to announce unlimited video generation … Read More

New models, new website, new dashboards

Community Update Hey, it’s Captain Freeman from our Telegram chat group, moving your cheese, changing your dashboards. Our platform is getting bigger (10,000+ models!), so we’ve spruced things up to make room for even more. This is the new dashboard … Read More

ComfyUI workflows are now available via Graydient web apps and API

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai https://youtu.be/66kky0CPDBU Import your favorite workflow, run it … Read More

FLUX, StableDiffusion 3.5L, Pony, Illustrious and more – Unlimited AI images and LLM service

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai https://youtu.be/ocTtqrqzF7A Enjoy unlimited FLUX and Stable Diffusion … Read More

PollyGPT 3 Released, available via API, too. Train your own bots

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai Great news, everyone! PollyGPT V3 is here … Read More

Try our vastly improved WebUI, reimagined from the ground up

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai Try our new unified editor! We heard … Read More

New Product Launch: Train your own Image models with LoraMaker

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai We’re thrilled to announce LoRaMaker.ai – a … Read More

More payment options for Creative Suite plans now available

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai By popular request – you can now … Read More

Background removal and After Detailer have arrived

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai For API users, Stable2go WebUI and PirateDiffusion … Read More

New Samplers are now available across the platform

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai By request, the rapid-fire LCM samplers are … Read More

New VAE, HDR, and Latent Space controls added

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai For the discerning AI images creators that … Read More

HDR Color correction in Stable Diffusion with VASS

What is VASS and HDR color and composition correction? VASS is an alternative color and composition for SDXL. To use it, add the the /vass parameter to your SDXL render. Whether or not it improves the image is highly subjective. … Read More

How do swap the VAE in runtime in Stable Diffusion

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai What is a VAE? VAE is a … Read More

How to use FreeU in Stable Diffusion with PirateDiffusion

How to use FreeU to boost image quality FreeU is a simple and elegant method that enhances render quality without additional training or finetuning by changing the factors in the U-Net architecture. FreeU consists of skip scaling factors (indicated by … Read More

What does Arafed mean in Stable Diffusion? ShowPrompt and the Describe command

What does Arafed mean in Stable Diffusion? ShowPrompt and the Describe command Ah yes, the mysterious Arafed. You might see this in prompt outputs or when using our /describe command “The word “arafed” appears to have multiple meanings in English, … Read More

PirateDiffusion Challenges

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai Update: Congratulations to our winners, Tomato (below) … Read More

PirateDiffusion Content Guidelines

Be cool. These are general guidelines for your creations in public groups and private spaces as well. This is an evolving document based on community feedback. … Read More

Stable Diffusion XL models are here! ✨

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai You can try Stable Diffusion XL (SDXL) … Read More

Request a model install

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai Are we missing a model? We can … Read More

Free alternative to ChatGPT – Introducing PollyGPT LLM

Anniversary Update Pirate Diffusion turns one To be honest, we weren’t sure if anyone was going to show up, but we’re so happy that you all did. We’ve met so many great creators and it’s a joy to see all … Read More

PirateDiffusion turns one, PollyGPT announced

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai On August 22, 2022, PirateDiffusion launched as … Read More

Side Hustles welcome: Introducing Graydient Affiliates

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai Introducing our Partner program – open to … Read More

We want your feedback: Join our roadmap

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai We’ve redesigned all of our product pages … Read More

We’re Art Basel @ Black Dove Gallery

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai Graydient is powering an interactive Generative Art … Read More

Cloud Drive update: You can add now favorites

Heads Up! This page is over 30 days old, and may not reflect the newest features of our software. For the latest news, workflows, and prompt engineering discussions, VIP member’s channel on https://my.graydient.ai Concept Faves are now available in My … Read More