Edit – Using Instruct Pix2Pix

Editing images with your words

It may be tricky to jump straight into this if you’re not already familiar with creating images, as well as uploading and remixing them, so please check those videos first and try Edit last.

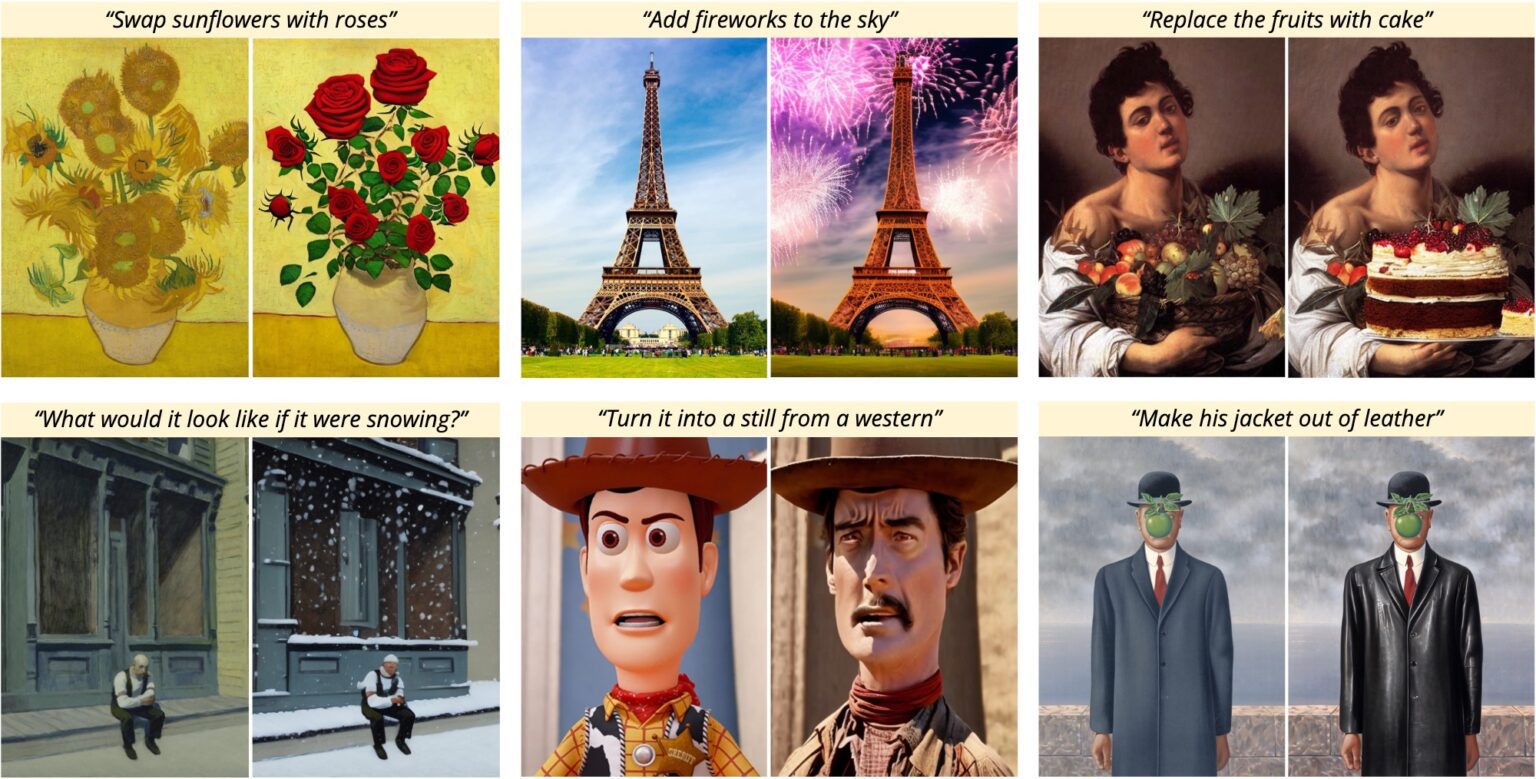

The image below summarizes what Edit can do, in a nutshell. It is a powerful algorithm that understands natural language, so you can give it orders or ask hypothetical questions and see your ideas come to life in seconds.

WHICH MODE SHOULD I CHOOSE?

Remix – Use /remix for dramatic changes to the ENTIRE image. You are going from one image to another completely different one, a technique which is more commonly known as img2img.

Remix excels at style transfer because you can target the unique aesthetic style of one of our AI models. Or you can work within one style, but with remix you will use prompts (as in tags) instead of trying to express changes with natural language.

Edit – Use /edit when you don’t want to completely transform the subject. You are basically keeping the same image, such as when you don’t want to change someone’s face.

Edit is based on its own AI model, which was trained on examples written with GPT-3, so it understands basic questions and verbs. This technique is known as Instruct Pix2Pix. Unlike Remix, Edit cannot call a different AI model. The model works at 512×512 resolution, so downscale your input for best results. After editing, use our upscaler to boost the fidelity with AI.

Edit has three limitations:

First, Edit uses its own internal AI model, which means it is locked into a certain aesthetic. That’s really the big difference between Remix and Edit at the time of this video. With Remix, you’re choosing from hundreds of our preloaded styles and reprompting into them. Edit’s model is its own.

The second limitation is resolution. You can upload your own photos and edit them, but note that the output will be a lower resolution, 512×512. Prepping your input image to 512×512 will be a lot more stable, and you’ll be less likely to run into issues. The third is memory: Edit uses a lot of VRAM, so avoid unusual aspect ratios. Images along the lines of 512×832 or 640×512 should also be OK, but probably not 1024×512.
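As a rough sketch of the sizing advice above, this Python helper suggests a target size to downscale to before uploading. The function name `edit_friendly_size`, the rounding to multiples of 64, and the 832-pixel cap on the long side are assumptions drawn from the example sizes mentioned here, not documented limits of the service.

```python
def edit_friendly_size(width, height, short_side=512, max_long_side=832, step=64):
    """Suggest a size to downscale an image to before using /edit.

    Assumptions (not official limits): the short side is scaled to 512,
    and the long side is rounded to a multiple of 64 and capped at 832.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")

    # Scale so that the shorter side lands on `short_side`.
    scale = short_side / min(width, height)
    long_side = max(width, height) * scale

    # Round the long side to a nearby multiple of `step`, then keep it
    # between `short_side` and `max_long_side`.
    long_side = int(round(long_side / step)) * step
    long_side = max(short_side, min(long_side, max_long_side))

    # Return (width, height) in the original orientation.
    if width >= height:
        return long_side, short_side
    return short_side, long_side


if __name__ == "__main__":
    print(edit_friendly_size(1024, 1024))
    print(edit_friendly_size(2048, 1024))
```

When the cap applies (for example, a 2:1 panorama), the suggested size has a different aspect ratio from the original, so the image would need to be cropped rather than stretched to fit.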

When those limitations are respected, Edit truly shines at changing the background, the lighting, the color, and the textures of some elements of an image, and at altering very specific parts of a photo.

How to use Edit

Upload an image from your phone, or render one first:

A render returns multiple images, so we need to select the one we want to edit first. Edit can only alter one image at a time, so let’s pick one.

Select the picture by replying to it, as if you were going to chat with the photo.

And in the reply box, ask a question in the most specific way possible:

/edit what if her hair was blue?

Use a verb in your prompt. Asking a question can sometimes lead to better results than barking a command. In both cases, be specific.

Remember: Edit does not use

You can also use positive and negative prompts to put more emphasis on what is important.

/edit change her hair to the color ((blue))
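If you write prompts from a script or a notes file, the emphasis syntax above is easy to generate. This is only an illustrative sketch: the helper name `emphasize` and its `level` parameter are hypothetical, and the only thing taken from this guide is the double-parentheses form shown in the example.

```python
def emphasize(term, level=2):
    """Wrap a term in nested parentheses, e.g. ((blue)), to add weight.

    `level` is the number of parenthesis pairs; 2 matches the example
    in this guide. Other levels are an assumption, not documented here.
    """
    if level < 0:
        raise ValueError("level must be zero or positive")
    return "(" * level + term + ")" * level


if __name__ == "__main__":
    # Rebuilds the example command from this guide.
    print("/edit change her hair to the color " + emphasize("blue"))
```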

And those are the edit basics! Take a break here and try it.

Pump the brakes with /strength

In the example below, it did add burning buildings as asked, but it didn’t know where to stop, and we lost a part of the hat. This can be fixed.

TONING IT DOWN

The power of /edit is controlled by a parameter called /strength. Strength is set to 1 by default. Very low strength is 0.05, very high strength is 0.99.

How to fix the above example:

/edit /strength:0.5 What if the buildings behind his hat are on fire?

Here we are saying don’t touch the man or hat, and don’t use full force.
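For anyone composing these messages programmatically, here is a minimal sketch that builds the command text in the format shown above. The function name `edit_command` is hypothetical, and clamping to 0.05–0.99 simply reuses the "very low" and "very high" values quoted in this guide; it is an assumption that values outside that range are not useful.

```python
def edit_command(instruction, strength=None):
    """Build an /edit message, optionally with a /strength value.

    Without `strength`, the bot's default applies. With it, the value
    is clamped to the 0.05-0.99 range quoted in this guide (assumed).
    """
    instruction = instruction.strip()
    if not instruction:
        raise ValueError("instruction must not be empty")
    if strength is None:
        return f"/edit {instruction}"
    strength = min(0.99, max(0.05, float(strength)))
    return f"/edit /strength:{strength:g} {instruction}"


if __name__ == "__main__":
    print(edit_command("What if the buildings behind his hat are on fire?", 0.5))
```

A reasonable way to use it is to start near 0.5 and nudge the value up or down between attempts until the edit stops spilling into parts of the image you wanted to keep.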

ENGLISH IS HARD

Sentences that have multiple meanings really trip up the AI.

Asking “what if she had nice hands” can also literally mean “What if she was in the possession and/or general company of many hands that are nice”, because English is hard. It doesn’t help to switch from “have” to “is/are”. Two extra sets of hands are more likely to appear, at least in the first release.

For hands, fix those notoriously troubled spots with inpainting instead.

On nudity and the edit command

Edit can do lewds if you are very determined, but don’t expect much.

The reason is that Edit uses Instruct Pix2Pix, its own lower-resolution 512×512 AI model, which was not trained on pornography. It therefore cannot excel at pornography on its own, unlike a very explicit model like Hassansblend, which has specific tagging for the various carnal acts.

Instruct Pix2Pix is a summer child, clueless of many such adulteries.

You’re much better off trying inpainting or remixing into an adult AI model like URPM, which will change the face… and that’s probably for the best.

Edit can be difficult to control at first, but it’s also a wonderful tool that you can . These are the very early days for this technology, so please set your expectations accordingly.
